TL;DR:

- AI in mobile apps can improve trial conversion but often underperforms on long-term retention and churn reduction. Successful implementation requires careful focus on user needs, responsiveness, ongoing governance, and continuous feedback loops. Partnering with experts helps organisations build secure, meaningful AI-driven experiences that deliver sustained value over time.

AI in mobile apps is not the instant engagement multiplier many organisations expect it to be. RevenueCat's 2026 data reveals a striking paradox: AI-powered apps may convert trials to paid subscriptions at higher rates, yet they can underperform non-AI apps on long-term retention and churn. For UK businesses investing in mobile, that gap between expectation and reality matters enormously. This guide cuts through the noise to explain what AI genuinely delivers in mobile applications today, where the risks lie, and how to build a strategy that creates lasting value rather than short-lived novelty.

Table of Contents

- Core roles of AI in modern mobile apps

- How AI impacts user experience and engagement

- Key challenges: risk, security and governance in AI-enabled apps

- Getting it right: real-world examples and lessons for UK organisations

- The real impact: what most AI app strategies miss

- How Pocket App can help you harness AI in your mobile app

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Multifaceted advantages | AI drives personalisation, support, security, and smarter functionality in mobile apps. |
| Performance trade-offs | AI can boost trial conversion but does not guarantee long-term user retention. |
| Security is essential | Effective AI governance and risk controls must extend beyond typical app security measures. |
| UK case studies are instructive | Learning from examples like the GOV.UK app helps businesses avoid common pitfalls. |
| Strategic deployment | Successful AI adoption in mobile apps relies on clear goals, expert guidance, and measuring real user impact. |

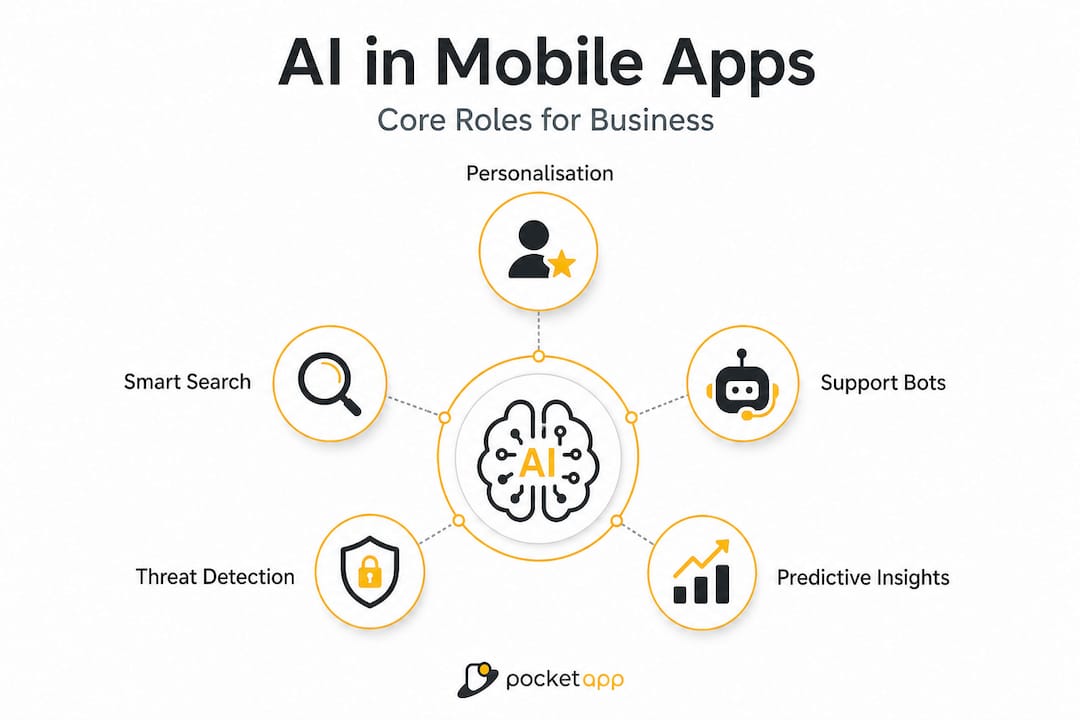

Core roles of AI in modern mobile apps

Now that we've set the scene, let's break down the main roles AI plays in real-world mobile apps. Understanding these categories helps your team ask sharper questions before committing budget and development time.

According to research on AI in mobile UX, the most common AI functions in UK mobile apps include:

- Personalisation and recommendations: Surfacing relevant content, products, or services based on user behaviour, purchase history, or location context.

- AI-powered search: Moving beyond keyword matching to semantic understanding, so users find what they need with natural language queries.

- Conversational support: Chatbots and voice assistants handling tier-one queries, appointment booking, and transactional tasks without human agents.

- Document and image understanding: Automatically extracting data from receipts, ID documents, medical images, or product photos.

- Security and fraud detection: Identifying anomalous behaviour patterns in real time, reducing fraud in financial and e-commerce apps.

These capabilities appear across consumer, enterprise, and public-sector contexts. The GOV.UK app, for example, uses AI to personalise content and improve navigation for millions of citizens. Meanwhile, enterprise apps deploy document understanding to automate manual data entry, saving meaningful hours per employee each week. If you are exploring AI use cases for your own organisation, these five categories are a practical starting framework.

| AI use case | Primary benefit | User impact | Typical sector |
|---|---|---|---|
| Personalisation | Relevance of content | Higher engagement per session | Retail, media, healthcare |
| AI search | Speed and accuracy | Faster task completion | E-commerce, public services |
| Conversational support | 24/7 availability | Reduced support wait times | Financial services, utilities |
| Image/document understanding | Automation of data entry | Lower friction in onboarding | Insurance, logistics, NHS |
| Fraud detection | Risk reduction | Increased trust and safety | Banking, payments, retail |

AI-driven personalisation is consistently one of the highest-ROI features when implemented correctly, because it directly reduces the effort users must exert to find value. The key phrase there is "when implemented correctly," which leads us neatly into the next consideration.

How AI impacts user experience and engagement

With an understanding of what AI can do, it is crucial to assess how these capabilities translate into actual user engagement and retention. The data here is more nuanced than many technology vendors would have you believe.

Latency is the most underrated factor in AI feature adoption. When an AI-powered recommendation or search result takes more than 300 milliseconds to appear, users begin abandoning the feature entirely. Empirical benchmarks for AI apps show that perceived responsiveness is the single biggest driver of whether users continue engaging with AI features after their first session. If your AI backend is slow, users will simply stop using it, regardless of how accurate or sophisticated the underlying model is.
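One way to act on that threshold is to give every AI call an explicit latency budget and serve a non-AI fallback when the budget is missed. The sketch below is illustrative only: `with_latency_budget`, `slow_recommendations`, and `popular_items` are hypothetical names, and it assumes the AI feature can be wrapped as a plain function call.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout

LATENCY_BUDGET_S = 0.3  # the 300 ms threshold discussed above

_executor = ThreadPoolExecutor(max_workers=4)


def with_latency_budget(ai_call, fallback, budget_s=LATENCY_BUDGET_S):
    """Return (result, source, elapsed_s), using fallback() if ai_call is too slow."""
    start = time.perf_counter()
    future = _executor.submit(ai_call)
    try:
        result, source = future.result(timeout=budget_s), "ai"
    except FutureTimeout:
        # The slow call keeps running in the background; its result is discarded.
        result, source = fallback(), "fallback"
    return result, source, time.perf_counter() - start


def slow_recommendations():
    """Hypothetical stand-in for a model call that takes too long."""
    time.sleep(0.5)
    return ["personalised item"]


def popular_items():
    """Hypothetical non-AI fallback, for example a cached 'most popular' list."""
    return ["popular item"]


result, source, elapsed = with_latency_budget(slow_recommendations, popular_items)
print(source, result)
```

Logging `source` and `elapsed` for each request lets your team see how often the budget is missed and compare that with feature adoption.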

Trial-to-paid conversion is where AI currently shines. AI apps convert at 8.5%, outperforming their non-AI counterparts in subscription funnels. That is a meaningful commercial advantage and it explains why so many product teams are enthusiastic about launching AI features. The problem is what happens next.

| Metric | AI-powered apps | Non-AI apps |
|---|---|---|
| Trial-to-paid conversion | 8.5% | Lower |
| Annual retention rate | 21.1% | 30.7% |
| Median churn speed | 30% faster | Baseline |
| User trust (perceived) | Variable | More stable |

AI-powered apps show annual retention of just 21.1% compared with 30.7% for non-AI apps, and they churn 30% faster at the median. That is a significant long-term commercial risk if your revenue model depends on subscription renewals or repeat engagement. The implication is clear: AI can get users through the door faster, but keeping them engaged requires far more than a clever algorithm.
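A quick back-of-envelope calculation shows how the two effects interact. The sketch below assumes the annual retention figures apply to subscribers who converted from a trial, which is a simplification. The source gives no non-AI conversion figure, so the code computes only the break-even rate rather than guessing one.

```python
AI_TRIAL_TO_PAID = 0.085         # figure quoted above
AI_ANNUAL_RETENTION = 0.211      # figure quoted above
NON_AI_ANNUAL_RETENTION = 0.307  # figure quoted above


def retained_per_1000_trials(trial_to_paid, annual_retention):
    """Subscribers still paying after a year, per 1,000 trial starts."""
    return 1000 * trial_to_paid * annual_retention


ai_retained = retained_per_1000_trials(AI_TRIAL_TO_PAID, AI_ANNUAL_RETENTION)

# Conversion rate at which a non-AI app keeps the same number of
# subscribers after a year as the AI app does.
break_even_conversion = AI_TRIAL_TO_PAID * AI_ANNUAL_RETENTION / NON_AI_ANNUAL_RETENTION

# How much lower AI retention is, relative to the non-AI figure.
relative_retention_gap = 1 - AI_ANNUAL_RETENTION / NON_AI_ANNUAL_RETENTION

print(f"{ai_retained:.1f} retained per 1,000 trials")      # 17.9
print(f"break-even conversion: {break_even_conversion:.1%}")  # 5.8%
print(f"relative retention gap: {relative_retention_gap:.1%}")  # 31.3%
```

Under these assumptions, a non-AI app converting only about 5.8% of trials would end the year with as many subscribers as an AI app converting 8.5%.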

The gap likely stems from a few causes. AI features can feel inconsistent when models are retrained or when personalisation becomes intrusive rather than helpful. Users also develop scepticism when AI suggestions miss the mark repeatedly. For optimising app engagement over the long term, behavioural feedback loops and user-controlled preference settings matter as much as the underlying model quality.

Pro Tip: Prioritise real user metrics over theoretical AI capability when designing new features. Trial conversion rates flatter AI features, but cohort retention data tells you whether they are genuinely delivering sustained value.

Understanding the AI integration impact on your specific user base requires instrumentation from day one. Set up cohort tracking before you launch any AI feature so that you have a baseline for honest comparison.
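As a minimal sketch of what that cohort tracking could compute, the example below compares retention for users who did and did not use an AI feature. The `User` record, the field names, and the definition of retention (any activity on or after day N since signup) are assumptions for illustration; your analytics platform may define retention differently.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class User:
    signup: date
    used_ai_feature: bool
    active_days: list[date] = field(default_factory=list)


def retention(users, day_n, as_of):
    """Share of eligible users with any activity on or after day_n since signup."""
    # Only count users who signed up at least day_n days before the report date.
    eligible = [u for u in users if (as_of - u.signup).days >= day_n]
    if not eligible:
        return None
    retained = sum(
        any((d - u.signup).days >= day_n for d in u.active_days) for u in eligible
    )
    return retained / len(eligible)


def cohort_report(users, as_of, checkpoints=(30, 60, 90)):
    """Retention at each checkpoint for the AI cohort and the baseline cohort."""
    cohorts = {
        "ai": [u for u in users if u.used_ai_feature],
        "baseline": [u for u in users if not u.used_ai_feature],
    }
    return {
        name: {n: retention(members, n, as_of) for n in checkpoints}
        for name, members in cohorts.items()
    }
```

Users who choose to try an AI feature may differ from those who do not, so a randomised holdout group gives a fairer baseline than self-selected cohorts.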

Key challenges: risk, security and governance in AI-enabled apps

Strong user engagement is important, but so is protecting your app from the unique risks introduced by AI. Standard app security practices are necessary but not sufficient when AI models are in the stack.

NIST's 2025 guidance on trustworthy AI identifies several threat categories that are specific to AI and machine learning integrations:

- Adversarial machine learning (ML): Attackers craft inputs designed to fool your model into producing incorrect outputs. In a fraud detection context, this could mean manipulating transactions to evade detection entirely.

- Model abuse: Legitimate-looking API calls used to extract proprietary training data or reverse-engineer model behaviour for competitive or malicious purposes.

- Privacy attacks: Membership inference attacks that allow bad actors to determine whether specific individuals were present in the training dataset, creating significant GDPR exposure for UK organisations.

- Data poisoning: Corrupting training data over time to degrade model performance or introduce systematic biases that benefit an attacker.

These threats go well beyond the traditional app security concerns of SQL injection, insecure data storage, or weak authentication. They require a different mindset entirely.

NIST draws an important distinction between governance requirements for predictive AI models (which analyse historical data to forecast outcomes) and generative AI models (which produce new content). Generative models carry higher risk profiles because their outputs are less predictable and harder to audit. If your app uses a large language model (LLM) for conversational support, for example, you need content safety controls, output monitoring, and clear escalation paths that simply are not necessary for a rules-based chatbot.

"Organisations must treat AI systems as dynamic components that evolve over time, applying security controls across the full AI lifecycle rather than treating deployment as a one-time event." — NIST Trustworthy and Responsible AI Report (2025)

Practical steps your team should put in place before shipping any AI feature:

- Lifecycle security controls: Treat AI model training, deployment, and retraining as distinct risk phases, each requiring security review.

- Regular adversarial audits: Commission periodic red-team exercises specifically targeting your AI components, not just general penetration testing.

- Staff training on attack vectors: Developers and product managers should understand how adversarial ML works at a conceptual level so they can spot design decisions that increase exposure.

- Input validation and output filtering: Apply strict validation to everything that enters your AI pipeline, and filter outputs before they reach the user interface.
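The last step above can be sketched as a thin wrapper around the model call. This is a deliberately simple illustration, not a complete defence: the length limit, the regular expressions, and the names `validate_input`, `filter_output`, and `guarded_call` are all assumptions, and `model` stands for any function that takes and returns text.

```python
import re

MAX_INPUT_CHARS = 2000  # assumed limit; tune per feature
CONTROL_CHARS = re.compile(r"[\x00-\x08\x0b\x0c\x0e-\x1f\x7f]")
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
LONG_DIGITS = re.compile(r"\b\d{12,19}\b")  # card-number-like digit runs


class RejectedInput(ValueError):
    """Raised when user input fails validation before reaching the model."""


def validate_input(text):
    """Strip control characters and reject empty or oversized input."""
    if not isinstance(text, str):
        raise RejectedInput("input must be text")
    cleaned = CONTROL_CHARS.sub("", text).strip()
    if not cleaned:
        raise RejectedInput("input is empty")
    if len(cleaned) > MAX_INPUT_CHARS:
        raise RejectedInput("input exceeds length limit")
    return cleaned


def filter_output(text):
    """Redact email addresses and long digit runs before display."""
    text = EMAIL.sub("[redacted email]", text)
    return LONG_DIGITS.sub("[redacted number]", text)


def guarded_call(model, user_text):
    """Validate the input, call the model, then filter what it returns."""
    return filter_output(model(validate_input(user_text)))
```

In production, simple pattern rules like these would typically sit alongside dedicated content safety tooling rather than replace it.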

Consulting advanced app security expertise early in the design process is considerably cheaper than remediating a privacy breach or model compromise post-launch.

Pro Tip: Treat AI as a dynamic co-pilot in your app rather than a static feature. Models change, threat landscapes evolve, and your governance framework needs to keep pace with both.

Getting it right: real-world examples and lessons for UK organisations

Looking at challenges is one thing, but what does successful AI-enabled mobile app adoption look like in the UK context? Several organisations have already navigated the early learning curve and their experience is instructive.

The GOV.UK app beta is arguably the most publicly documented example in the UK public sector. The GOV.UK app uses AI-powered search and personalisation based on prior user behaviour, with features achieving broad adoption across its beta user base. Critically, the Government Digital Service (GDS) team iterated continuously based on user feedback, adjusting personalisation weights and search parameters rather than assuming the initial model would be optimal.

In the enterprise space, logistics and field service companies have deployed image understanding to let engineers capture asset data through their phone camera rather than completing paper forms. Accuracy rates for data extraction now routinely exceed 95% in well-trained deployments, eliminating a category of manual work entirely. In consumer apps, retail brands are using on-device AI to power visual search, letting shoppers photograph a product they see in the world and instantly find it in the catalogue.

The common thread across successful deployments is not the sophistication of the AI model. It is the quality of the feedback loop between user behaviour and product iteration. Here are the key lessons:

- Define success before launch. Decide whether you are optimising for conversion, retention, task completion speed, or support deflection before you write a single line of AI code. Vague goals produce unmeasurable outcomes.

- Start narrow, then expand. The GOV.UK team launched AI features for a specific subset of high-frequency tasks rather than attempting to personalise everything at once. Narrower scope means faster learning.

- Instrument everything from day one. You cannot improve what you cannot measure. Cohort retention, feature adoption rates, and error rates should all be tracked from the first production release.

- Communicate AI to users honestly. Users who understand that a feature is AI-powered and that it learns over time are more forgiving of early imperfections and more likely to provide the feedback signals the model needs.

- Budget for ongoing model maintenance. AI is not a deploy-and-forget investment. Model drift, new attack vectors, and evolving user expectations all require ongoing development budget.

Organisations that invest in tailored mobile experiences aligned to genuine user needs consistently outperform those that bolt on AI features for their marketing appeal.

The real impact: what most AI app strategies miss

Drawing on these lessons, let us step back for a frank perspective on what most strategies miss when bringing AI to mobile apps.

The honest truth is that most organisations approach AI in mobile with the wrong primary question. They ask "what can AI do for us?" when they should be asking "what do our users genuinely struggle with, and can AI remove that friction?" Those questions sound similar but they lead to completely different product decisions. The first produces feature lists. The second produces outcomes.

We have seen this pattern repeatedly across sectors. A financial services client ships an AI-powered insights dashboard because competitors have done so. User adoption plateaus after week two because the insights are accurate but not actionable. No one told the model that this particular user base makes financial decisions quarterly, not daily. The AI is right, and it is completely irrelevant.

"Sustained user value comes from understanding context, iterating on real behaviour, and building earned trust. It cannot be shortcut with a better model."

The retention data backs this up. The fact that AI apps churn faster than non-AI apps is not a condemnation of the technology. It is evidence that early adopters are shipping AI features before they truly understand their users' context. Conversion looks good because novelty drives trials. Retention falls because novelty fades and the underlying value was never there.

The organisations that get this right reframe their KPIs entirely. Instead of celebrating trial conversion spikes at launch, they track cohort retention at 30, 60, and 90 days. Instead of measuring feature usage volume, they measure whether users who engage with an AI feature report higher satisfaction and return more frequently. Staying current with app development trends for 2026 matters, but chasing trends without anchoring them to user needs is how organisations burn budget without building loyalty.

The most valuable AI features are often invisible. When personalisation is working well, users do not notice it as technology. They just notice that the app feels effortless. That invisibility is the goal.

How Pocket App can help you harness AI in your mobile app

If your organisation is ready to move forward with AI-powered mobile solutions, here is how partnering with domain experts makes the difference.

Getting AI right in mobile requires more than technical capability. It requires a strategic understanding of your users, honest assessment of where AI adds genuine value, and the security and governance expertise to deploy responsibly. That is a broad set of skills that few in-house teams can assemble quickly.

At Pocket App, we bring together product strategy, UX design, AI integration, and lifecycle security support for organisations building serious mobile solutions across retail, healthcare, enterprise, and public sector. Our work on bespoke mobile app development draws on a portfolio of over 300 projects, giving us practical pattern recognition that accelerates your path to outcomes rather than features. Whether you are exploring your first AI feature or scaling an existing deployment, we provide the honest, experience-grounded consultancy that helps you invest where it counts. Find out more about how we build with AI and get in touch to discuss your project.

Frequently asked questions

What are the main benefits of adding AI to a mobile app?

The most impactful benefits in UK mobile apps come from AI features such as personalisation, search, and security, which reduce user friction and improve task completion rates across consumer and enterprise contexts.

Does integrating AI always improve mobile app retention?

Not necessarily. In RevenueCat's 2026 data, AI-powered apps record 21.1% annual retention versus 30.7% for non-AI apps, so AI may lift initial conversion while long-term churn remains higher.

What security risks come with AI in mobile apps?

AI and ML are vulnerable to adversarial attacks including data poisoning, model abuse, and privacy inference attacks, all of which require specialist end-to-end controls beyond standard app security practice.

How can UK organisations get started with AI in mobile apps?

Begin by identifying the specific user friction points where AI can remove genuine effort, then pilot a narrowly scoped feature with experienced app developers who can instrument outcomes from day one.
